Network & 3rd Party

Notices about service interruptions we are tracking, including 3rd party events, with details and resolution where available.

Please check here to view status updates on known issues. If you are experiencing an issue not documented here, or require direct confirmation of resolution, please submit a ticket. For additional information on our ticket system and support process, please review the details found here.

While an issue is ongoing, updates may be posted as it develops, with a final resolution posted once the issue is resolved.

We may also track issues in this section that are outside of our network or control, since they can affect our clients directly: calls to or from impacted areas might be perceived as a problem on our network.

Pending steps will include a deep investigation of the carriers that failed and the interdependencies this uncovered (where Carrier A actually seems to depend on Carrier B for some services), a review of what we can do to better isolate those interdependencies, and re-striping (or redistributing) phone numbers across the carrier fabric for better resiliency in the event of a carrier failure.
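As a rough illustration of the re-striping idea, the sketch below spreads a set of numbers evenly across carriers and then estimates how many numbers stay reachable when one or more carriers fail. The carrier names, the sample numbers, and the `restripe` and `surviving_fraction` helpers are all hypothetical and are not taken from our production tooling.

```python
from collections import defaultdict
from itertools import cycle


def restripe(numbers, carriers):
    """Assign numbers to carriers round-robin so each carrier holds an even share."""
    assignment = defaultdict(list)
    for number, carrier in zip(sorted(numbers), cycle(carriers)):
        assignment[carrier].append(number)
    return dict(assignment)


def surviving_fraction(assignment, failed_carriers):
    """Return the fraction of numbers whose carrier is not in failed_carriers."""
    total = sum(len(nums) for nums in assignment.values())
    alive = sum(
        len(nums)
        for carrier, nums in assignment.items()
        if carrier not in failed_carriers
    )
    return alive / total if total else 0.0


if __name__ == "__main__":
    # Hypothetical sample data for illustration only.
    numbers = [f"+1604555010{i}" for i in range(6)]
    carriers = ["carrier_a", "carrier_b", "carrier_c"]
    plan = restripe(numbers, carriers)

    # A single independent carrier failure leaves two thirds of numbers reachable.
    print(surviving_fraction(plan, {"carrier_a"}))

    # If carrier_a silently depends on carrier_b, losing carrier_b takes both down.
    print(surviving_fraction(plan, {"carrier_a", "carrier_b"}))
```

The second call shows why hidden interdependencies matter: two carriers that fail together behave like a single, larger failure domain, so even striping alone does not help unless the dependency is isolated as well.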

2021.04.27 15:00 PST Carrier routes remain stable, and no further reports have been received. We will be working on our own root cause analysis, and will provide documentation on options to assist mitigation planning for clients who need a strategy to prevent issues like this.

2021.04.27 10:50 PST Outbound routes are restored. Capacity issues should be resolved. The carriers are reporting that services are restored, but the detailed RFO (explanation / reason for outage) will be published in 5 to 10 days. We will be updating our own plans and documentation with a mitigation strategy as a result of what we observed today. Thanks to everyone for their reports and understanding. We understand Rogers wireless customers may still have trouble reaching some numbers; if this continues, it is likely something they will have to ask Rogers for support with. We were able to complete our own Rogers tests successfully at this time! More information will be provided as we collect it from the reporting carriers.

A DDoS attack briefly affected our Vancouver POP Tuesday afternoon (resolved within minutes of detection). The upstream facility manager was able to block the attack very quickly, and service was restored without action on our part. Prompt action from our network partners stopped the attack targeting the facility within minutes of detecting it.

The facility is undergoing some maintenance to upgrade core network router infrastructure.

The window is scheduled to run from June 25th, 23:00 PST to June 26th, 03:00 PST. During this time frame, Network Engineers will be upgrading router firmware and migrating router network connections to new-generation line cards. This is to help improve network performance and redundancy.

2020.06.19 RESOLVED.

After several attempts to work around the issue, it became apparent that the problem originated as the calls were leaving the caller's network. The static on the line was injected by the callers' own carrier as they attempted to call other numbers that routed through the affected equipment in that carrier's network, including at least one bad cable.

There were at least two separate problems within the network of the western ILEC.

Two separate repairs restored service: one on the night of the 17th (around 01:00 PST on June 18) and one on the night of the 18th (around 01:00 PST on June 19).

Thank you to all our clients who reported the issue. Our own team conducted about 1,000 test calls to develop the data needed to diagnose the issue. We thank our partner carriers for their cooperation and their speed in resolving this difficult issue.

Due to the high demand being placed on cellular networks (in part from all of us working from home, compounded by call forwarding, which uses two channels for each call), the networks may not keep up with demand. See video or read on...

For the foreseeable future, users of all telecom networks can expect to see intermittent congestion, but there are ways to help reduce the issues.
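To put the call forwarding point in numbers, here is a minimal sketch of the arithmetic, assuming each direct call occupies one channel and each forwarded call occupies two (one inbound leg and one outbound leg). The `channels_in_use` helper and the call counts are hypothetical and only illustrate the effect.

```python
def channels_in_use(direct_calls, forwarded_calls):
    """Estimate concurrent channels: one per direct call, two per forwarded call."""
    if direct_calls < 0 or forwarded_calls < 0:
        raise ValueError("call counts must be non-negative")
    return direct_calls + 2 * forwarded_calls


if __name__ == "__main__":
    # Hypothetical office with 100 concurrent calls.
    print(channels_in_use(100, 0))   # all calls answered directly
    print(channels_in_use(50, 50))   # half forwarded to cell phones
    print(channels_in_use(0, 100))   # every call forwarded
```

Under that assumption, forwarding every call doubles the channel demand for the same number of conversations, which is why reducing forwarding is one way to ease congestion.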

For Clients with SIP trunking, there is a lot of flexibility in how you transition to a work-from-home model. There are many factors to consider. The solutions presented here suggest methods that could be customized to work with your existing systems. This is not about being opportunistic. It’s about education, and enabling our Clients to continue to operate their businesses where possible from home or other remote locations. We understand that we are only relevant as long as we are helping our clients.

We are aware of an ongoing issue in one of our carrier partners' networks. A telecom switch in Toronto is affecting some of our Canadian inbound numbers as well as some of our toll free services. [Since resolved].

Many of these options have always existed but, as evidenced by our Clients' enquiries, a detailed explanation of the options is needed now more than ever.

At BitBlock, we believe our Clients are the core of our business. We are committed to continuing to deliver the service you expect to assist you in providing your own clients and customers the service required for business continuity.

We are refining contingency plans for our Clients and others who may be trying to alter their work environments as they adopt “social distancing”, including many options to facilitate remote work or working from home.

To all our Clients…

Our team at BitBlock is prepared to keep your critical systems stable through the current pandemic / health crisis.

We are posting this later than desired. Our goal is to get an initial notice posted within 15 minutes of detecting / confirming an issue.

The issue is now resolved (14:20 PST). It affected a single switch, and was rather unusual in that at no point during the issue (excepting the period during the restart / re-registration of phones) did the switch show a markedly decreased call volume.

The following is a summary, in reverse order, of what we know about the ongoing, widespread telecommunication disruption affecting Canada. The effects are felt across the country, with many companies unaware of the issue as their toll free or other services failed. In many cases partial failures are reported, which is contributing to many people remaining unaware of the problem. We are hopeful the news we received that repairs are underway will prevent this problem from extending into Monday. If not, expect many more people to start paying attention as the offices open tomorrow.

2020.02.11 00:15 PST

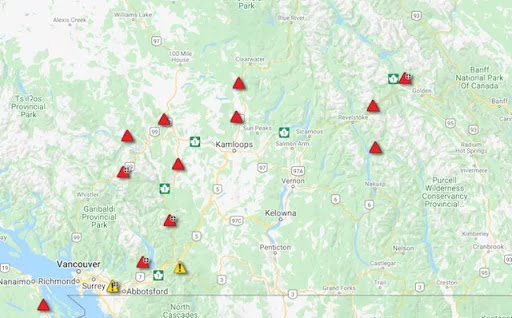

We are continuing to receive updates. The carriers are making progress, but repairs are about a day behind schedule. Most operations are near normal, though additional disruption is still possible. Please check out the photos below for an idea of the scope of the problems. Multiple fiber cuts and disruptions caused by weather-related events span much of the province:

More photos below...
